Hours of Fun Creating Visual Art with Prompts

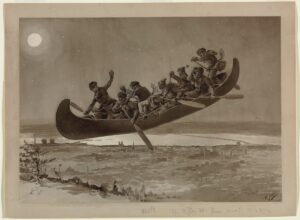

“koala bear eating eggs benedict in a bistro” is the prompt I entered into OpenAI’s DALL·E system to generate this image. I have been reading articles about AI image generation since DALL·E 2 launched earlier this year, and have been experimenting with it hands-on since I received access earlier this week. It is lots of fun, but it doesn’t always generate the results you might expect. I’ve been trying to describe to it artist Henri Julien’s La Chasse-galerie, a drawing of 8 voyageurs flying in a canoe at night, and DALL·E struggles with the flying canoe. For each prompt, DALL·E initially creates 4 image variations - I’ve selected the most interesting one for each prompt below.

La Chasse-galerie by Henri Julien

4 men in a flying canoe at night, generated by DALL·E

4 men paddling a flying canoe through the sky at night, generated by DALL·E

4 men in a canoe in the sky at night, generated by DALL·E

lumberjacks flying in a canoe past the moon, by DALL·E. I like how they are chopping the tree while flying the canoe.

Generating Images on your PC

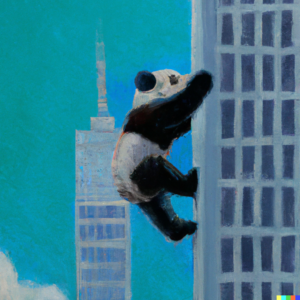

There are also models that you can run on your home PC. I’ve checked out min-dalle (used by https://www.craiyon.com/) and stable-diffusion (demo). Both can create imagery that roughly matches my prompts, which is amazing. I found that the output from min-dalle was a bit crude, with distorted features. The output I’ve generated from stable-diffusion is much better.

For me, quality really makes a difference in how much I get out of the tool. Generating low-quality images isn’t as much fun. Perhaps this is a reflection of my artistic skills - I can make a crappy drawing of a panda climbing with a ballpoint pen while watching a PowerPoint in a random meeting. But I personally don’t have the skill to render my ideas as well as DALL·E - maybe that’s what makes it so fascinating.

A painting of a panda climbing a skyscraper, generated by DALL·E mini

A painting of a panda climbing a skyscraper, generated by stable-diffusion

A painting of a panda climbing a skyscraper, generated by DALL·E

Not all fun and games

As much fun as this is, there is a lot of controversy about the implications of making tools like this accessible.

NBC News actually has a pretty good article about the biases exhibited by DALL·E. For example, ‘a flight attendant’ only produces images of women. In this respect, it is very much like other tools currently in the marketplace - I was curious, and searched Getty Images for ‘flight attendant’ stock photos, and found only women in the top results. Image generation tools continue to propagate the bias problems we see everywhere.

An Atlantic columnist raises some other interesting points about the backlash he faced when he used generated images in his newsletter, as opposed to professional illustration that you might see in a magazine feature. Here are some further thoughts on the ideas he presented:

- Using a computer program to illustrate stories takes away work that would go to a paid artist: “AI art does seem like a thing that will devalue art in the long run” - I wonder whether the computer program simply becomes another tool in the artist’s arsenal. Is the value of an illustrator in the mechanics of creating an image, or in visually conveying an idea? What if we think of a program like DALL·E as a creative tool, like Photoshop? Did Photoshop devalue photography?

- There is no compensation or disclosure to the artists who created the imagery used to train these art tools - “DALL-E is trained on the creative work of countless artists, and so there’s a legitimate argument to be made that it is essentially laundering human creativity in some way for commercial product.” - At primary school age, my children brought home art created using pointillism techniques, without compensating Georges Seurat and Paul Signac or disclosing them as inspiration. Popular image editing packages have had Van Gogh filters for years. We all learn from and build on the work of those who preceded us. Once a style or idea is presented to the world, who owns it? Is training an algorithm a separate right, different from training an artist?

There are also challenges with image generation tools facilitating the creation of offensive imagery and disinformation, making it easier to cause harm than it is today with existing tools like Photoshop.

These tools will continue to progress, and will create change in ways, and on a scale, that are hard to predict. It remains to be seen whether we collectively decide to put new controls in place to address these challenges. In the meantime, I’ll be generating imagery of pandas and koalas in urban environments.